ETL from Webhook to Databricks in a few clicks

- Build scalable, production-ready data pipelines in hours, not days

- Extract data from Webhook and load into Databricks without code

- Complete your entire ELT pipeline with SQL or Python transformations

How to get started with our Webhook integration

See it in action

Ready-Made Data Workflow Kits with Databricks

Everything you need to simplify advanced data integration

Zero Infrastructure to Manage

Managed SQL/Python Modeling

Predictable Value-Based Pricing

Reduced Data Development Waste

No-Code Any Data Ingestion

Efficient Database Replication

Infinite Scale

Integrated Data Activation

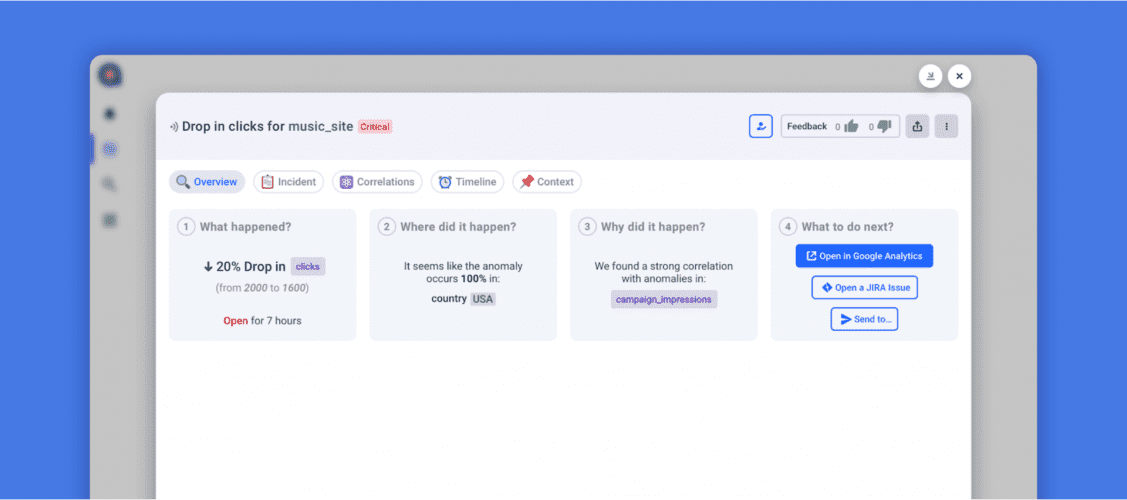

Proactive Troubleshooting

Bring all your data together. Integrate data from anywhere

Greg Robinson

Staff Data Scientist

Load Webhook to any data lake or warehouse

FAQ

Using Rivery’s data connectors is straightforward. Enter your credentials, define the target you want to load the data into (e.g. Snowflake, BigQuery, Databricks or any data lake), and auto-map the schema to generate it on the target end. You control which data is extracted from the source and how often it syncs. To learn more, follow the docs for the specific connector.
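To make the schema auto-mapping step more concrete, here is a minimal sketch of how a target schema could be inferred from a sample webhook payload. It is an illustration only, not Rivery's implementation: the `infer_schema` function, the `SQL_TYPES` mapping, and the sample event are all hypothetical, and the type names are generic rather than tied to a particular warehouse.

```python
from typing import Any

# Hypothetical mapping from Python value types to generic SQL column types.
SQL_TYPES = {bool: "BOOLEAN", int: "BIGINT", float: "DOUBLE", str: "STRING"}


def infer_schema(record: dict[str, Any], prefix: str = "") -> dict[str, str]:
    """Flatten a nested JSON record and guess a column type for each leaf."""
    schema: dict[str, str] = {}
    for key, value in record.items():
        column = f"{prefix}{key}"
        if isinstance(value, dict):
            # Nested objects become prefixed columns, e.g. data_amount.
            schema.update(infer_schema(value, prefix=f"{column}_"))
        else:
            # Lists, nulls, and unknown types fall back to STRING.
            schema[column] = SQL_TYPES.get(type(value), "STRING")
    return schema


# Hypothetical webhook event used only for illustration.
sample_event = {
    "id": 42,
    "type": "order.created",
    "data": {"amount": 19.99, "paid": True},
}
schema = infer_schema(sample_event)
```

A real connector would typically sample many events and reconcile conflicting types before creating the target table; this sketch looks at a single record.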

Yes. All data connectors within Rivery comply with the highest security and privacy standards, including GDPR, HIPAA, SOC 2 and ISO 27001. In addition, when data flows into your target data warehouse, you can configure it to pass through your own cloud file system rather than Rivery's.

The most popular data connectors are for use cases like marketing, sales and finance. These include Salesforce, HubSpot, Google Analytics, Google Ads, LinkedIn Ads, Facebook Ads, TikTok and more.

Rivery supports both CDC (change data capture) database replication and standard SQL extraction, so you can choose the method that works best for you. Learn more here.
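The difference between the two replication methods can be sketched in a few lines. This is a simplified illustration under stated assumptions, not Rivery's implementation: the function names, the `updated_at` watermark column, and the event format are all hypothetical.

```python
def sql_extract(source_rows, last_watermark):
    """Standard SQL extraction: re-query rows changed since the last sync."""
    return [row for row in source_rows if row["updated_at"] > last_watermark]


def apply_cdc(target, events):
    """CDC: replay change-log events (upserts and deletes) onto the target."""
    for event in events:
        if event["op"] == "delete":
            target.pop(event["id"], None)
        else:
            target[event["id"]] = event["row"]
    return target


# Hypothetical source table: only row 2 changed after watermark 10.
source_rows = [
    {"id": 1, "updated_at": 5, "name": "a"},
    {"id": 2, "updated_at": 12, "name": "b"},
]
changed = sql_extract(source_rows, last_watermark=10)

# Hypothetical change log: row 2 was upserted and row 3 was deleted.
target = {1: {"name": "a"}, 3: {"name": "c"}}
events = [
    {"op": "upsert", "id": 2, "row": {"name": "b"}},
    {"op": "delete", "id": 3},
]
target = apply_cdc(target, events)
```

One practical consequence: a watermark query only sees rows that still exist in the source, so deletes go unnoticed, whereas a change log carries them explicitly.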

Power up your data integration