Data orchestration tools are instrumental in today’s data economy. Data teams are forced to do more with less and don’t have the luxury of jumping between tools just to collect, unify, and categorize siloed data.

They need to be agile and efficient, and they need an orchestration tool that helps them collect data from independent sources that are often restricted or hard to access.

So what’s the solution? A data orchestration tool can help data teams overcome that challenge in minutes.

In this article, we cover the most popular data orchestration tools on the market, so you can make up your own mind about what’s the best solution for you, your team, and your business.

What Is a Data Orchestration Tool?

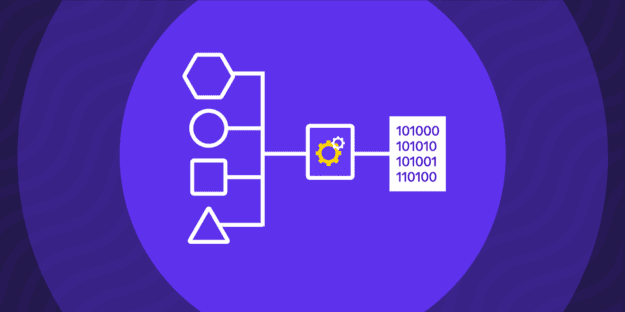

Data orchestration tools automate the integration of data from various sources by collecting, organizing, and merging it into a usable format. They unify data from diverse sources, including cloud services, legacy systems, and data lakes, into a single, accessible platform for analysis.

These tools streamline the process of making data ready for analysis by connecting different data storage and processing systems, ensuring a cohesive data management solution across your organization.

The process looks like this:

Automate: Establish a data pipeline and allow the tool to operate the workflow.

Monitor: Track the workflow from different angles to make sure it runs securely. The tool identifies possible issues and notifies you in real time.

Scale: The orchestration tools use parallel processing and reusable pipelines to scale the operations.
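To make the automate, monitor, and scale loop more concrete, here is a minimal Python sketch. It is not taken from any specific tool: the `run_pipeline` and `run_all` functions and the in-memory `SOURCES` are hypothetical stand-ins. It shows one reusable pipeline running in parallel across several sources, with failures recorded instead of stopping the whole run.

```python
from concurrent.futures import ThreadPoolExecutor


def run_pipeline(source_name, records):
    """Reusable pipeline: the same steps run for every source."""
    cleaned = [r.strip().lower() for r in records if r.strip()]
    return {"source": source_name, "rows": len(cleaned), "data": cleaned}


def run_all(sources, max_workers=4):
    """Run the pipeline for each source in parallel and collect failures."""
    results, failures = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {
            name: pool.submit(run_pipeline, name, recs)
            for name, recs in sources.items()
        }
        for name, future in futures.items():
            try:
                results[name] = future.result()
            except Exception as exc:  # monitoring hook: record, do not crash
                failures[name] = str(exc)
    return results, failures


# Hypothetical in-memory sources standing in for real systems.
SOURCES = {
    "crm": [" Alice ", "BOB", ""],
    "billing": ["carol", "  Dave"],
    "broken": None,  # iterating over None raises TypeError inside the pipeline
}

results, failures = run_all(SOURCES)
```

In a real tool, the `failures` dictionary is where alerting would plug in, and the worker pool would typically be spread across multiple machines rather than threads.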

Key Steps in Data Orchestration

Data orchestration tools follow four key steps:

- Collection: This is the initial phase where the tool collects data in real time from many sources, separating reliable from unreliable ones.

- Transformation: In the second phase, the tool converts the collected data into the correct format and language, or data layer, so it can continue to the next step.

- Combine, Categorize, & Advance: In the third phase, the transformed data is combined and categorized. The data is then refined so that it is easy to use once it reaches the appropriate category.

- Activation: In the final step, the data becomes available for real-time analysis and strategy-making.
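The four steps can be sketched as a chain of small Python functions. This is only an illustration under stated assumptions: the source names, field names, and function names below are hypothetical, and a real tool would read from live systems rather than an in-memory dictionary.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical raw records from two sources; field names are illustrative only.
RAW_SOURCES = {
    "web_shop": [{"email": "ANA@EXAMPLE.COM", "amount": "19.90"}],
    "mobile_app": [
        {"email": "ana@example.com", "amount": "5.10"},
        {"email": "li@example.com", "amount": "7.00"},
    ],
}


def collect(sources):
    """Collection: pull every record and remember where it came from."""
    return [dict(rec, source=name) for name, recs in sources.items() for rec in recs]


def transform(records):
    """Transformation: normalize formats (lowercase emails, integer cents)."""
    return [
        {
            "email": r["email"].lower(),
            "cents": int(Decimal(r["amount"]) * 100),
            "source": r["source"],
        }
        for r in records
    ]


def combine(records):
    """Combine and categorize: merge records per customer across sources."""
    totals = defaultdict(lambda: {"cents": 0, "sources": set()})
    for r in records:
        totals[r["email"]]["cents"] += r["cents"]
        totals[r["email"]]["sources"].add(r["source"])
    return dict(totals)


def activate(profiles):
    """Activation: expose analysis-ready rows, largest spender first."""
    rows = [(email, p["cents"] / 100, sorted(p["sources"])) for email, p in profiles.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


report = activate(combine(transform(collect(RAW_SOURCES))))
```

Here the same customer appears in two sources with different email casing, and the transformation step is what lets the combine step recognize the records as one profile.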

Why Use Data Orchestration?

Data orchestration tools streamline business workflows by collecting, processing, analyzing, and storing data. Here are some reasons to start using one:

- Cost-effective solution: Data orchestration tools automate data maintenance, which saves you the cost of hiring people for that role.

- Minimizes human errors: Automating manual steps reduces the chance of mistakes, and the tools can handle a large influx of information from different sources in real time.

- Compact solutions: Many businesses have numerous data sets and systems that would otherwise need relocation and additional warehouses. Data orchestration tools are a three-in-one solution since they collect, organize, and prepare data for further analysis.

15 Best Data Orchestration Tools

The market is flooded with data orchestration tools, but we’ve selected the 15 best ones you should consider for your business:

1. Rivery

Rivery is one of the most compatible data orchestration tools for SaaS businesses, as it lets you connect and orchestrate both internal and third-party data sources. With this tool, you can create a seamless ecosystem and work remotely without worry, because all your data is stored in the cloud with unified access for all team members.

Features:

- Digital Cloud Data Lake: Large enterprises can manage all data and distribute it across teams and departments for immediate use.

- DataOps Management: Users have complete control over their data management and can create templates for blueprint data models.

- Reverse ETL: Push data back into specific apps according to insights once it’s been made fully operational in the warehouse.

Pros:

- Significantly improves data management.

- Saves you time and money while eliminating manual tasks.

- Full control over the business thanks to real-time data insights.

Cons:

- It may take some time to master the vast number of features.

Pricing:

Rivery has a unique pricing model, charging per “RPU” or Rivery Pricing Unit. The monthly cost is the number of RPUs used during the month multiplied by the plan’s price per RPU. There are 3 business plans:

- Starter: $0.75 per RPU

- Professional: $1.20 per RPU

- Enterprise: Customizable (contact sales).

2. Keboola

Keboola is another end-to-end ETL solution ideal for larger teams looking to unify their data and make it usable. It is especially recommended for large businesses in need of more data warehouses.

Features:

- 100+ Integrations: Keboola has got you covered with ready-to-use integrations to move data across your most used apps.

- Data Catalog: If management is your top focus, you can use Keboola’s Data Catalog to simplify data asset management.

- Pre-built connectors: There are over 200 pre-built connectors for data extraction and loading through a couple of clicks.

Pros:

- Excellent orchestration and other data management tools in one.

- Build data pipeline orchestrations using code or no-code features.

- Convenient learning curve.

Cons:

- Smaller businesses may not benefit from all the features.

Pricing:

Keboola has a unique pricing model and charges per minute. It also comes with a Free Tier plan (120 minutes) that lets you experience all the top features for free. Once the free minutes are used up, you can buy more minutes as “credits” for 14 cents each.

3. Improvado

Improvado gained popularity quickly and was massively adopted by many developers because it transforms data into the most suitable format for users. The automated dashboards and reports make Improvado a go-to for digital marketers, data analysts, and digital specialists.

Features:

- Discovery and Insights: AI-driven insights let you oversee all user engagement and the latest trend reports regarding your business.

- Data Cleaning: Ensure all your data is concise and coherent by removing duplicates or unnecessary information.

- Team Connection: Improvado allows you to connect your clients, team, and stakeholders on one platform to simplify business processes.

Pros:

- Offers accurate predictive analysis based on collected data.

- Allows you to manage and integrate data from a large number of integrations.

- Comes with an amazing set of features that speed up the ELT process.

Cons:

- The pricing plan is unpredictable.

Pricing:

There are 3 individual plans—Growth, Advanced, and Enterprise. Each offers a different set of capabilities, so you should choose according to your organization’s size and daily business needs. You can book a demo or opt for the Enterprise plan and decide on a price with the Sales team.

4. Apache Airflow

Apache Airflow is a robust, open-source data orchestration tool and one of the most popular Python-based ETL orchestrators. Workflows are defined as DAGs (Directed Acyclic Graphs), which describe tasks and their dependencies so they can be scheduled and automated.
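To illustrate why a DAG is a useful shape for a workflow, here is a small sketch that uses only Python’s standard library. It is not Airflow’s API: the `PIPELINE` dictionary, its task names, and the helper functions are hypothetical. It shows how declaring dependencies is enough to derive a valid run order, and how a cycle is rejected.

```python
from graphlib import CycleError, TopologicalSorter  # standard library, Python 3.9+

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
PIPELINE = {
    "extract_orders": set(),
    "extract_customers": set(),
    "join_tables": {"extract_orders", "extract_customers"},
    "load_warehouse": {"join_tables"},
}


def execution_order(dag):
    """Return one valid run order; raises CycleError if the graph has a cycle."""
    return list(TopologicalSorter(dag).static_order())


def is_acyclic(dag):
    """True when every task can be scheduled, i.e. no circular dependencies."""
    try:
        execution_order(dag)
        return True
    except CycleError:
        return False


order = execution_order(PIPELINE)
```

Because the two extract tasks do not depend on each other, an orchestrator is free to run them in parallel, while `join_tables` always waits for both.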

Features:

- Pure Python: Python is highly flexible when it comes to transformations. You can seamlessly run data processes with Spark, for example.

- Open-source: As opposed to other orchestration tools, Apache Airflow lets you request code modifications and make changes.

- Scalable orchestration: Allows multi-node and parallel orchestration.

Pros:

- Third-party integrations with the latest platforms.

- A comprehensive set of data orchestration tools that can handle any data workflow size.

- Excellent visibility and control over your business.

Cons:

- It may be a bit complex to navigate.

Pricing:

Apache Airflow is an open-source tool, which means it’s free to use. All you have to do is install the software and get started.

5. Prefect

Prefect is another Python-based orchestration tool that gives you full command over your data workflows, helps you build quality infrastructure, and lets you react when needed. It supports custom retries and caching, which help workflows recover from failures and reduce the need for error-prone manual intervention.
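The idea behind retries and caching can be sketched in plain Python. This is not Prefect’s API: the `retry` decorator, the `fetch` task, and the `calls` counter are hypothetical and written with the standard library only, to show how a flaky task can recover on its own and how repeated work is skipped.

```python
import functools
import time


def retry(times=3, delay_seconds=0.0):
    """Re-run a failing task for up to `times` attempts before giving up."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, times + 1):
                try:
                    return func(*args, **kwargs)
                except Exception:
                    if attempt == times:
                        raise  # out of attempts: surface the error
                    time.sleep(delay_seconds)
        return wrapper
    return decorator


calls = {"fetch": 0}  # counts how often the task body actually runs


@functools.lru_cache(maxsize=None)  # caching: identical inputs are not recomputed
@retry(times=3)
def fetch(table):
    """Hypothetical flaky task that fails on its first attempt only."""
    calls["fetch"] += 1
    if calls["fetch"] == 1:
        raise ConnectionError("transient failure")
    return f"rows from {table}"


first = fetch("orders")   # fails once, then succeeds on the retry
second = fetch("orders")  # served from the cache, the task body does not run again
```

The order of the decorators matters in this sketch: the cache wraps the retrying function, so only successful results are stored.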

Features:

- Prefect UI: Allows you to monitor, configure, analyze, and coordinate data workflows.

- API Servers: Provide flow run instructions based on collected state information from workflows.

- Control Panel: Thanks to the Control Panel, you will always have full visibility over your workflows.

Pros:

- Versatile transformation thanks to Python.

- Convenient data management tool that offers many subfeatures.

- Saves developers time and allows them to focus on other tasks.

Cons:

- Steep learning curve.

Pricing:

Prefect offers three pricing plans—personal, organizational, and enterprise. The personal plan is free forever, the organizational one costs $405 monthly if paid yearly, and the enterprise plan doesn’t have a set price (contact sales).

6. Dagster

Dagster is a versatile data management and orchestration tool that comes in handy during each stage of the data development cycle. It’s a sustainable asset to businesses because it assists in overcoming possible challenges that arise from various infrastructures.

Features:

- Auto-materializing Assets: Independently creates and updates key data. If a pipeline fails, you will not have to fix it manually, because Dagster has you covered.

- Relief From Overwhelming Infrastructures: Dagster significantly assists in overcoming any difficulties arising from different infrastructures for local and production environments.

- ML Framework Integrations: If you plan on using machine-learning frameworks, you can make them work with Dagster.

Pros:

- Innovative and highly functional data management tool, especially for businesses using multiple infrastructures.

- Allows you to have more control over business data pipelines.

- Convenient for all businesses.

Cons:

- Building pipelines is a bit difficult.

Pricing:

Dagster offers 3 pricing plans convenient for every budget:

- Solo: $10 for 7,500 DC (Dagster credits). The cost per additional credit is $0.04.

- Team: $100 for 30,000 DC. Cost per additional credit is $0.03.

- Enterprise: Customizable.
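To compare the two fixed-price plans for a given usage level, the arithmetic can be sketched as follows. The figures are copied from the list above and may be out of date. The assumption that each plan is a base fee plus a per-credit charge beyond the included credits is our reading of that list, and the function names are hypothetical.

```python
from decimal import Decimal

# Figures copied from the plan list; verify current pricing before relying on them.
PLANS = {
    "solo": {"base": Decimal("10"), "included": 7_500, "extra": Decimal("0.04")},
    "team": {"base": Decimal("100"), "included": 30_000, "extra": Decimal("0.03")},
}


def plan_cost(plan, credits_used):
    """Base fee plus a per-credit charge for usage beyond the included credits."""
    p = PLANS[plan]
    overage = max(0, credits_used - p["included"])
    return p["base"] + overage * p["extra"]


def cheaper_plan(credits_used):
    """Name of the plan with the lower cost for the given usage."""
    return min(PLANS, key=lambda name: plan_cost(name, credits_used))
```

Under these assumptions, `plan_cost("solo", 10_000)` comes to 110 dollars, which is already more than the Team plan’s base fee, so heavier users may want to run the numbers before picking the cheaper-looking plan.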

7. Mage

Mage is one of the best data management tools when it comes to visibility, especially regarding scheduling and managing data pipelines. It supports Python, SQL, and R and lets you build pipelines in real time.

Features:

- Third-party Data Integration: Mage allows you to join data coming from third parties.

- Constant Monitoring, Orchestrating, and Functioning: Using Mage, you can orchestrate thousands of pipelines and track their progress.

- Instant Feedback: If you have trouble coding, Mage will give you instant feedback on your progress.

Pros:

- Three programming languages available.

- Standalone file containing modular code you can reuse and test with data validations.

- Excellent user interface.

Cons:

- Not recommended for start-up businesses during the initial stages.

Pricing:

The platform used to offer pricing plans, but they have since been taken down. For now, you can try Mage out for free.

8. Google Cloud Composer

Google Cloud Composer is a user-friendly tool for creating and managing workflows in the Google Cloud ecosystem. With its intuitive interface, you can build data pipelines and orchestrate complicated data without comprehensive coding knowledge.

The platform offers a vast library of pre-built connectors, enabling seamless integration with various data sources and services, including Rivery, BigQuery, Dataproc, etc. The platform also prevents vendor lock-in with its multi-cloud functionality.

Additionally, Google Cloud Composer’s scalable infrastructure automatically adjusts resources based on workload demands to ensure optimal performance.

However, although the platform offers robust features and reliable performance, you may find its advanced error-handling capabilities limited compared to other tools.

Pros:

- Intuitive user interface for designing and managing workflows.

- Built on the open-source Apache Airflow project and operated using Python.

- A comprehensive library of pre-built connectors.

- Scalable infrastructure with automatic scaling.

- Reliable customer support team.

Cons:

- Limited advanced error-handling features.

Pricing Model:

Google Cloud Composer has a pay-as-you-go pricing model based on resource usage, with costs calculated for computing, storage, and networking resources consumed during workflow execution.

New users get $300 of free credit on Composer and other Google Cloud products in the first 90 days.

9. Shipyard

Shipyard is a tool known for offering seamless data sharing. To provide such a service, Shipyard comes with on-demand triggers, automatic scheduling, and built-in notifications with no need for code configuration or similar adjustments.

Features:

- Detailed History Logging: You have a history log report that gives you an overview of the entire data workflow.

- Connects Over 50 Low-code Integrations with Data Stacks: You can count on seamless integrations without any obstructions.

- Data Orchestration From Third Parties: By third parties, we mean Lambda, Zapier, Cloud Functions, dbt Cloud, and the like.

Pros:

- Shipyard can solve complex data orchestration in less than 5 minutes.

- You don’t have to manually re-code or add to the existing code in case of complex situations.

- Share repeated or adjusted solutions with other members of the group in real time.

Cons:

- May require some time to understand the way it works.

Pricing:

Shipyard comes with two pricing plans:

- Free Plan: 10 hours of free use of Shipyard services per month

- Team Plan: Customizable with a $50 starting price per month.

10. AWS Glue

AWS Glue is a fully managed ETL service offered by Amazon Web Services; it’s designed to facilitate building and managing data pipelines. With its serverless architecture, AWS Glue removes infrastructure provisioning and management, allowing you to concentrate on data transformation tasks.

The platform has built-in ETL capabilities for data extraction, transformation, and loading, making it easier for users to prepare and analyze their data. AWS Glue also integrates with other AWS services—such as Amazon S3, Redshift, and Athena—for comprehensive data integration and analysis workflows.

Nonetheless, while AWS Glue provides a user-friendly experience and extensive documentation, you may find the customization options to be limited compared to self-hosted ETL solutions.

Pros:

- Fully managed service with serverless architecture.

- Rich set of built-in ETL capabilities.

- Seamless integration with other AWS services.

- Extensive documentation and community support.

Cons:

- Limited customization options.

Pricing Model:

AWS Glue offers a pay-as-you-go pricing model based on the number of Data Processing Units (DPUs) consumed and the duration of job executions. The platform will bill you per second, with no upfront costs or long-term commitments.
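As a rough illustration of per-second, DPU-based billing, the cost of a single job run can be estimated as DPUs multiplied by run time in hours and by the hourly rate. The sketch below is a simplification: the function name is hypothetical, the rate is passed in as a parameter because it is not stated here and varies by region, and any minimum billing duration is ignored.

```python
def glue_job_cost(dpus, duration_seconds, rate_per_dpu_hour):
    """Estimate one job run: DPUs x hours x hourly rate, measured by the second."""
    if dpus < 0 or duration_seconds < 0:
        raise ValueError("dpus and duration_seconds must be non-negative")
    dpu_hours = dpus * duration_seconds / 3600
    return round(dpu_hours * rate_per_dpu_hour, 4)


# Hypothetical run: 10 DPUs for 30 minutes at an assumed rate of 0.44 per DPU-hour.
example_cost = glue_job_cost(dpus=10, duration_seconds=1800, rate_per_dpu_hour=0.44)
```

Check the current AWS pricing page for the actual rate and billing minimums before using an estimate like this for budgeting.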

11. Luigi

Luigi is one of the best tools when it comes to complex data orchestration. It offers outstanding opportunities for developing and monitoring data to simplify the duties of the developers, helping them save time on manual tasks.

Features:

- Complex Infrastructure: Luigi handles complex workloads, including A/B test analysis, internal dashboards, recommendations, and external reports.

- User-friendly Web Interface: With Luigi, you can search, filter, and prioritize seamlessly.

- Open-source: Because it’s open-source, Luigi is a great option for developers.

Pros:

- Ideal for heavy infrastructures as the tool is handy in complex situations.

- You can integrate multiple tasks in a single pipeline.

- No need to worry about the backend.

Cons:

- Scalability issues.

Pricing:

Given that it’s open-source, Luigi is free for anyone to use. You just have to get past the learning curve first.

12. Astronomer

Astronomer is a promising data orchestration and management tool because it puts developers first and ensures that the data flows seamlessly. The main purpose of the tool is to minimize data downtime, which it does effortlessly thanks to a handful of useful features.

Features:

- Simple DAGs Protection: You can use the Airflow upgrades and custom high-availability configs for faster and seamless protection.

- Visibility: Astro is your second set of eyes on analytic views, easy interfaces for logs, and possible alerts across all environments.

- CI/CD Workflow: This feature helps you automate processes like code changes, builds, and testing.

Pros:

- Allows you to create an outstanding environment with robust infrastructure.

- Helps you write DAGs faster.

- Comes with excellent customer support.

Cons:

- The pricing table is somewhat complex.

Pricing:

Astronomer uses the pay-as-you-go pricing model and charges $0.35-$0.77 per deployment per hour. However, you can also pay according to your worker count.

13. Azure Data Factory

Azure Data Factory is a cloud-based data integration service provided by Microsoft Azure. The tool enables you to create, schedule, and orchestrate data workflows across various sources.

With its visual interface and drag-and-drop functionality, you can easily design complex data pipelines without the need for extensive coding. Azure Data Factory also offers built-in connectors for integrating with a wide range of data sources and services, both on-premises and in the cloud.

In addition, the platform seamlessly integrates with other Azure services like Azure Blob Storage, Azure SQL Database, and Azure Synapse Analytics. This enables end-to-end data processing and analytics workflows.

While Azure Data Factory provides extensive documentation and community support resources, you may find the initial learning curve to be steep.

Pros:

- Intuitive visual interface for designing data workflows.

- Built-in connectors for seamless integration.

- Integration with other Azure services.

- Extensive documentation and community support.

Cons:

- Steeper learning curve for beginners.

Pricing Model:

Azure Data Factory has a consumption-based pricing model. The platform will charge you for data integration and data movement activities based on the volume of data processed and the number of pipeline runs executed.

14. SAP Data Intelligence

SAP Data Intelligence is a data orchestration and integration platform offered by SAP. The platform has advanced data transformation capabilities—including data cleansing, enrichment, and harmonization—to provide data quality and consistency.

Moreover, SAP Data Intelligence integrates with SAP and non-SAP data sources and supports hybrid and multi-cloud environments. As a result, it delivers flexibility and scalability to meet diverse business requirements.

The tool also offers enterprise-wide data management, data discovery of business information, and data asset management.

Pros:

- Unified platform for data orchestration, integration, and machine learning.

- Advanced data transformation capabilities.

- Integration with SAP and non-SAP data sources.

- Comprehensive training, documentation, and support services.

Cons:

- Higher initial setup and customization efforts.

Pricing Model:

SAP Data Intelligence offers flexible licensing options, including subscription-based pricing and enterprise agreements tailored to your specific requirements.

15. Databricks

Databricks is a unified analytics platform designed for data engineering, data science, and machine learning workloads. Because it is built on Apache Spark, Databricks integrates natively with Spark for high-performance data processing and analytics at scale.

The platform offers a collaborative workspace with built-in version control, notebooks, and interactive visualizations. This allows teams to collaborate effectively on data projects.

Databricks also provides extensive documentation, training, and community support resources to help you maximize the value of your data.

Databricks offers robust features and capabilities, but some users may find the cost to be higher compared to other orchestration tools—especially for large-scale deployments.

Pros:

- Unified analytics platform for data engineering, data science, and machine learning.

- Native integration with Apache Spark.

- Collaborative workspace with built-in version control.

- Extensive documentation, training, and community support.

Cons:

- It can be expensive for large-scale data processing tasks.

Pricing Model:

Databricks offers a subscription-based pricing model with tiered plans. Pricing is based on the number of virtual machines (VMs) provisioned and the duration of usage.

How to Choose the Right Data Orchestration Platform

Here are our three criteria for choosing the right orchestration tool:

- The pricing plan has to correspond to the features offered. You cannot afford unnecessary financial exposure.

- Your goals should match the features. You will not benefit from a platform with robust features you cannot implement.

- The platform should match your skills and knowledge. Choose a platform you can operate without taking a course, which also helps reduce downtime.

Orchestrate Your Data with Rivery

Rivery delivers unmatched performance, enabling you to generate faster insights by aggregating, transforming, and creating data directly from your cloud data warehouse.

With native integrations for over 120 data sources and the ability to create custom data pipelines with API support, your data orchestration needs are covered.

Rivery makes building complex end-to-end ELT pipelines a breeze, whether you prefer using custom code or a no-code approach.

Don’t just take our word for it. Explore Rivery’s capabilities and see for yourself how Rivery stands out among the best data orchestration tools on the market.

Minimize the firefighting. Maximize ROI on pipelines.