Hold onto your spreadsheets because we’re about to embark on a journey through time. We’ll be delving deep into the past of traditional data warehouses, exploring the modern data stack, and even daring to speculate about the future of data management.

Intrigued? You should be. Whether you’re a seasoned data scientist, a budding data engineer, or a knowledge-hungry enthusiast, this is your guide to understanding the modern data stack.

Brief History of Data Management

Data management has existed for as long as people have had data (Library of Alexandria, anyone?), but it wasn’t until the dawn of the digital age that things really began to take off.

- 1950s: Data management was still in its infancy. Mainframe computers stored data as records on punch cards and magnetic tape, and the data was accessed through manual, batch-oriented processes. The holes in each punch card corresponded to different pieces of information.

- 1970s: The advent of relational databases revolutionized data management. Relational databases store data in tables, which makes it easier to query and analyze. SQL (Structured Query Language), still widely used today, was developed to interact with relational databases.

- 1980s – 2000s: This era saw data warehouses becoming an integral part of big companies’ data architecture.

Challenges Addressed by Data Warehouses

In the early days of data management, companies relied on disparate systems to store and process their data. Business users had no way to bring together all the different pieces of information they needed to make informed decisions. That is where data warehouses came in.

A data warehouse is a repository of all the company’s data, which can be accessed by multiple users and departments. It provides a single source of truth, allowing companies to easily access and analyze their data.

Data warehouses were created to address the following challenges:

- Data Silos: Before data warehouses, data was stored in isolated systems across the organization. By providing a centralized repository for data, data warehouses made consolidated data analysis and reporting possible.

- Advanced Data Analysis: They also introduced the concept of data modeling and schemas, which provided structure to the data and enabled more complex analyses.

- Performance: Running complex queries on transactional databases could be resource-intensive and slow. Data warehouses store data in a structured format and use optimized query processing techniques, making it possible to query large amounts of data without slowing down the operational systems that run the business.

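- Consistency: With data coming from multiple sources, there were issues related to data consistency, quality, and reliability. Data warehouses addressed this by cleaning and standardizing data as it was loaded into the central repository.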

- Data Modeling: Data warehouses introduced specific modeling techniques, like star schema and snowflake schema, designed for analytics and reporting.
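To make the star schema idea concrete, here is a minimal sketch using Python's built-in `sqlite3` module as a stand-in for a warehouse. The table names (`fact_sales`, `dim_product`, `dim_date`), the columns, and the sample rows are all hypothetical and chosen only for illustration. The point is the shape: one central fact table holding measurements, joined to small dimension tables that describe them.

```python
import sqlite3

def build_star_schema(conn):
    """Create one fact table surrounded by two dimension tables (hypothetical names)."""
    conn.executescript("""
        CREATE TABLE dim_product (
            product_id INTEGER PRIMARY KEY,
            category   TEXT NOT NULL
        );
        CREATE TABLE dim_date (
            date_id INTEGER PRIMARY KEY,
            year    INTEGER NOT NULL
        );
        CREATE TABLE fact_sales (
            sale_id    INTEGER PRIMARY KEY,
            product_id INTEGER NOT NULL REFERENCES dim_product(product_id),
            date_id    INTEGER NOT NULL REFERENCES dim_date(date_id),
            amount     REAL NOT NULL
        );
    """)
    # Made-up sample data, only there so the query below returns something.
    conn.executemany("INSERT INTO dim_product VALUES (?, ?)",
                     [(1, "books"), (2, "games")])
    conn.executemany("INSERT INTO dim_date VALUES (?, ?)",
                     [(1, 2022), (2, 2023)])
    conn.executemany("INSERT INTO fact_sales VALUES (?, ?, ?, ?)",
                     [(1, 1, 1, 10.0), (2, 1, 2, 15.0), (3, 2, 2, 40.0)])

def revenue_by_category_and_year(conn):
    """Typical analytical query: join the fact table to its dimensions, then aggregate."""
    rows = conn.execute("""
        SELECT p.category, d.year, SUM(f.amount)
        FROM fact_sales AS f
        JOIN dim_product AS p ON p.product_id = f.product_id
        JOIN dim_date    AS d ON d.date_id = f.date_id
        GROUP BY p.category, d.year
        ORDER BY p.category, d.year
    """)
    return rows.fetchall()

conn = sqlite3.connect(":memory:")
build_star_schema(conn)
print(revenue_by_category_and_year(conn))
```

A snowflake schema would go one step further and split the dimensions themselves into sub-tables, for example moving `category` into its own table referenced by `dim_product`.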

Data Warehouse Shortcomings

At first, data warehouses seemed like an excellent solution. After all, they allowed organizations to store data in a single place and query it using SQL, a relatively simple language. The biggest downside was that traditional data warehouses were inefficient and expensive due to their limited scalability and high maintenance costs.

But cracks started to show as data volumes grew exponentially.

Data Volumes: Then vs. Now

- Then: During the early days of data warehousing in the late 80s and early 90s, having a terabyte (TB) of data was considered huge. Most organizations dealt with gigabytes (GB) to terabytes of data.

- Now: With the advent of the digital age, social media, IoT devices, and more, data has exploded. Many enterprises now deal with petabytes (PB) of data, and big tech companies even handle exabytes (EB) of data.

The type of data changed, too. Thanks to developments in modern technology, the importance of unstructured data grew exponentially. Recent estimates indicate that unstructured data comprises over 80% of all enterprise data and that 95% of businesses prioritize unstructured data management.

Traditional data warehouses simply couldn’t keep up with the rapid changes and demands of modern business, leading to a need for new data management solutions.

The Present: The Modern Data Stack

While some believe that traditional data warehousing might be heading towards extinction, others argue that it’s still relevant, just transforming to meet the needs of modern businesses. Remember that data warehouses emerged as a solution to the problem of handling the growth in data volumes and complexity. However, as data-driven operations grew more sophisticated, organizations had to look for a better way to manage their data.

- Late 2000s: The rise of big data brought about an exciting new era of data management. Data warehouses no longer had to handle all the data analysis alone, as new technologies such as Hadoop and, somewhat later, Spark allowed companies to store and process vast amounts of data in a distributed manner, ushering in the age of the modern data stack.

- 2020s: Today, the modern data stack is rapidly evolving. Technologies like Data Lakes and Data Streams are emerging as more efficient solutions for Big Data. Machine learning and artificial intelligence are being used to automate some of the data management processes that were traditionally done manually. With cloud computing becoming increasingly accessible, companies can now access powerful tools at a significantly lower cost than before.

The modern data stack does not aim to replace traditional data warehouses. Instead, it offers an efficient, flexible, and scalable approach to handling vast volumes of structured and unstructured data. Data warehouses are a part of the modern data stack, serving as a centralized repository for data that has been cleaned and transformed and is ready for analysis.

Modern Data Stack: What, Why, and How?

Definition of the modern data stack

Simply put, a modern data stack is an end-to-end solution for managing data of any size or complexity. It typically includes components such as business intelligence (BI) tools, cloud computing platforms, and big data processing frameworks—all integrated to enable complex data analysis and reporting.

The modern data stack is a comprehensive architecture for collecting, storing, and analyzing large volumes of structured and unstructured digital data. It combines the power of distributed computing, machine learning, artificial intelligence, and NoSQL databases to allow organizations to store, query, analyze, and visualize vast amounts of complex data quickly and cost-effectively.

Components of the modern data stack

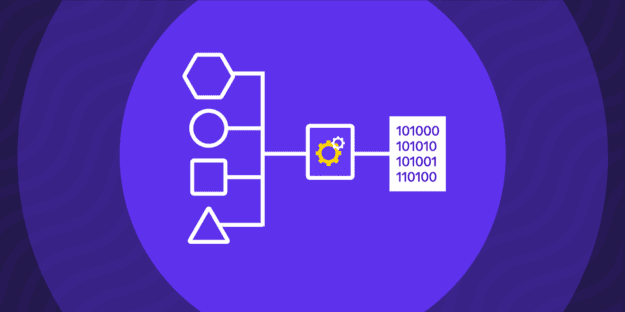

The modern data stack consists of five distinct components:

- Data Sources: This is where all the data originates from, including structured databases, unstructured files and documents, streaming sources like IoT devices, and more.

- Data Ingestion: This component is responsible for collecting data from various sources, normalizing it, and preparing it for storage in the system.

- Data Transformation & Storage: This component enables users to clean, filter, and transform the data into forms ready for analysis and visualization. ELT or ETL processes are used to move data from source systems into the data warehouse, data lake or data stream.

- Data Analysis: This is where organizations can use advanced analytics techniques like machine learning and AI to uncover insights from their data.

- Data Governance: Data governance is an important component of the modern data stack, as it enables organizations to manage and secure their data. It ensures that data is consistent across the system and can be trusted by users.
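The flow through the first four components can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a reference implementation: the in-memory `sqlite3` database stands in for a warehouse, and the `SOURCE_RECORDS` data, the table names, and the function names are hypothetical. It follows the ELT pattern: raw data is loaded unchanged first, then transformed inside the store.

```python
import sqlite3

# Hypothetical source records; real ones would come from databases, files, or streams.
SOURCE_RECORDS = [
    {"customer": "ana", "amount": "19.90", "country": "pt"},
    {"customer": "bo", "amount": "5.00", "country": "SE"},
    {"customer": "cy", "amount": "not-a-number", "country": "us"},  # bad row
]

def ingest(conn, records):
    """Extract + Load: copy source records into a raw table without changing them."""
    conn.execute("CREATE TABLE raw_orders (customer TEXT, amount TEXT, country TEXT)")
    conn.executemany(
        "INSERT INTO raw_orders VALUES (:customer, :amount, :country)", records
    )

def transform(conn):
    """Transform inside the store: cast types, normalize country codes, skip bad rows."""
    conn.execute("""
        CREATE TABLE orders AS
        SELECT customer,
               CAST(amount AS REAL) AS amount,
               UPPER(country)       AS country
        FROM raw_orders
        WHERE amount GLOB '[0-9]*.[0-9]*'  -- SQLite pattern: digit ... '.' digit ...
    """)

def analyze(conn):
    """Analysis: total order amount per country."""
    return conn.execute(
        "SELECT country, SUM(amount) FROM orders GROUP BY country ORDER BY country"
    ).fetchall()

conn = sqlite3.connect(":memory:")
ingest(conn, SOURCE_RECORDS)
transform(conn)
print(analyze(conn))
```

Governance is not modeled here. In a real stack it would cut across every step, for example as access controls on the raw and transformed tables and as checks that reject or quarantine rows like the malformed one above.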

Benefits of The Modern Data Stack

The modern data stack integrates various components of data architecture into end-to-end pipelines, streamlining the process from data collection to analysis. This includes data sources, data ingestion, data transformation and storage (which involves data warehouses), data analysis, and data governance. By combining these elements, the modern data stack allows organizations to harness the full potential of their data, ultimately leading to more informed decision-making and strategic insights.

It’s important to note that these components are all integrated, so you can quickly and easily move data between them. This means that data can be manipulated in real time with minimal effort, allowing you to glean insights much faster than before.

Moreover, the modern data stack offers a wide range of features that make it easier for teams to collaborate on data projects. From version control systems to workflow automation tools, the modern data stack provides everything you need to simplify and streamline your processes.

Challenges and Limitations of The Modern Data Stack

Overall, the modern data stack offers organizations a powerful platform for managing large volumes of structured and unstructured digital data. However, it does come with certain challenges:

- Complexity: The modern data stack is a complex architecture that requires specialized knowledge and skills to set up and manage effectively.

- Data silos and integration challenges: As data is collected from multiple sources and stored in multiple systems, it can often be difficult to integrate disparate datasets and ensure accuracy.

- Privacy and security concerns: As organizations store large volumes of sensitive data, they must ensure that their system is secure and compliant with regulatory requirements.

- Data governance challenges: Organizations must also manage user access and ensure their data is consistent and up-to-date. This can be difficult to manage in large, distributed systems.

- Scalability and performance issues: As the amount of data grows, so does the need for more powerful hardware and software. Organizations must ensure that their systems can handle increasing amounts of data without compromising performance.

The Future: New Technologies and Trends

The modern data stack has already come a long way, but there are still many exciting new technologies and trends that will continue to shape the future of data management:

- Edge Computing: Edge computing is an increasingly popular trend in data processing as it allows organizations to process and analyze data closer to the source, which reduces latency and speeds up results.

- Containerization: Containers are becoming a popular tool for deploying applications and managing data in the cloud. By isolating apps into lightweight containers, organizations can reduce costs and increase flexibility.

- AI & Machine Learning: AI and machine learning technologies are quickly becoming integral components of the modern data stack, as they enable organizations to uncover deeper insights from their data.

- Cloud Adoption: As more businesses move their data to the cloud, they are also taking advantage of cloud-native technologies like serverless computing, which allows organizations to focus on developing applications without worrying about provisioning and managing servers.

- DataOps & MLOps: DataOps is an emerging, DevOps-inspired methodology that focuses on streamlining collaboration between data scientists and engineers, while MLOps applies similar practices to deploying and operating ML models in production.

These emerging technologies are quickly changing the landscape of data management and showing us how powerful the modern data stack can truly be. As more organizations adopt these tools in their data stacks, we will see an increasing number of new and exciting applications arise from this technology.

Evolving Data Stack: What’s Next?

Acumen Research and Consulting projects that the global data analytics market will reach $329.8 billion by 2030, growing at a compound annual growth rate of 29.9%. This represents a significant increase from $31.8 billion in 2021.

As humans increasingly rely on data to make decisions, it is clear that having an end-to-end modern data stack is invaluable for businesses looking to stay ahead of the competition. Building end-to-end data pipelines, from source to insight, will allow organizations to focus less on managing their data stacks and more on driving business outcomes from the insights they derive from the data.

According to the “CEO Excellence Survey” conducted by McKinsey & Co., 62% of CEOs consider developing advanced analytics as their top strategy for achieving digital disruption. This underscores the importance of investing in the modern data stack to ensure organizations can capitalize on the opportunities presented by emerging technologies.

Best Practices for Implementing the Modern Data Stack

Successfully implementing the modern data stack requires organizations to have a clear understanding of their objectives, resources, and what technology is needed to achieve those objectives. Here are some best practices for building out a successful modern data stack:

Identify business needs and goals

Start by identifying your business needs and goals. This includes understanding the types of data you need to collect, how it will be used, and what insights are most valuable. Once these needs have been identified, organizations can move on to selecting the right technology for their specific use case.

Build a strong data architecture

Focus on building a strong data architecture. This includes properly storing, organizing, and managing data so that it can be quickly and easily accessed when needed. Organizations should also carefully consider how to integrate their existing legacy systems with the new technology they plan to implement.

Ensure data quality and governance

Ensure that the data is of high quality and governed properly. This includes regularly auditing datasets to identify any potential errors or inaccuracies that could affect the accuracy of insights generated from the data. Additionally, organizations should consider implementing a data governance strategy to ensure that all stakeholders can access and use data in accordance with security protocols and regulations.
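As a rough illustration of what a recurring dataset audit might check, the sketch below counts rows with missing required fields and rows that reuse a key. The `audit` function, its parameters, and the sample records are hypothetical. A real audit would likely cover more rules (value ranges, formats, freshness) and run on a schedule.

```python
def audit(rows, required, key):
    """Count rows with missing required fields and rows whose key was already seen."""
    missing = 0
    duplicates = 0
    seen = set()
    for row in rows:
        # A field counts as missing if it is absent, None, or an empty string.
        if any(row.get(field) in (None, "") for field in required):
            missing += 1
        value = row.get(key)
        if value in seen:
            duplicates += 1
        seen.add(value)
    return {
        "rows": len(rows),
        "missing_required": missing,
        "duplicate_keys": duplicates,
    }

# Made-up sample: the second row has an empty email, the third reuses id 2.
sample = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"id": 2, "email": "b@example.com"},
]
report = audit(sample, required=["id", "email"], key="id")
print(report)
```

Tracking these counts over time gives a simple signal of whether data quality is improving or degrading.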

Select the right technology stack

Finally, choose the right technology stack for your needs. This means selecting a system that can scale with your requirements and is flexible enough for different types of data analysis and insights. It’s also important to consider the overall cost of implementation, including hardware costs and specialist personnel.

Here are the things to look out for:

- Automated pipeline building and management: Automation should be used to streamline the process of collecting, organizing and managing data. This will help reduce time-consuming manual tasks and ensure the data is accurate and up-to-date.

- Single platform for data management: Rather than having multiple systems for data storage, management, and analytics, organizations should consider using a single platform that allows them to integrate all of their data into one system. This will reduce complexity and ensure better visibility into the data.

- Proactive security measures: As organizations store and manage large amounts of sensitive data, it is important to have adequate security measures in place.

- Connectivity to a wide range of data sources: It is important to ensure that the modern data stack offers the ability to connect with a wide range of data sources, including both structured and unstructured data.

- Ease of use: The modern data stack should also be easy to use and understand for all stakeholders, including non-technical personnel.

By following these best practices, organizations will be able to ensure that they have an efficient, secure, and cost-effective modern data stack in place that is capable of delivering powerful insights.

The Future Outlook for The Modern Data Stack

As organizations rely more on data-driven insights, the modern data stack will likely continue to evolve. However, one trend is clear – organizations must focus on building flexible and scalable data pipelines that allow them to quickly and easily access their data, no matter where it is stored or how complex it may be.
