ETL (Extraction-transformation-load) is quite popular among modern businesses for extracting and transforming data from various sources and loading it into a single storage system or cloud-based data lake.

As the name suggests, data warehouse ETL is a process that extracts, transforms, and loads data into a single targeted data warehouse. In fact, the ETL process offers multiple benefits, such as increased security, improved data quality, and accessibility.

Here, we’ll break down for you the ETL process in a data warehouse, its workflow, phases, and the characteristics of the staging area. Let’s extract, transform and load!

What Is ETL Process In A Data Warehouse?

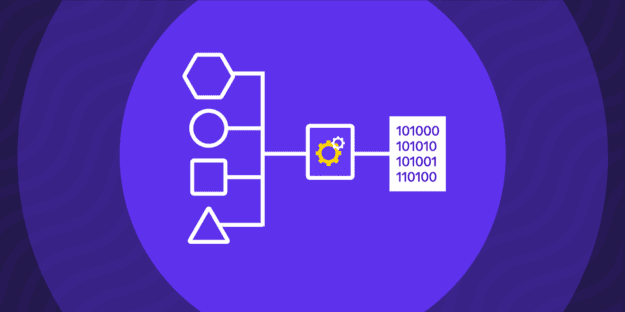

If you ask yourself, “What is ETL processing?” the most straightforward answer would be extracting data from various storage systems and sources, transforming it into a suitable format, and loading them into the targeted database.

The first step of the data ETL process is the various data extraction, such as flat files or data from SQL servers and RDBMS (Relational Database Management Systems). The next step is transformation, when you cleanse, join, filter, split, or sort the data to achieve the desired format.

Lastly, loading. At this stage, you load the data into your organization’s Enterprise Data Warehouse (EDW), Operational Data Store (ODS), or data mart. The ETL process in data warehouse conducts the last step—loading—when the data is extracted and processed, unlike the ELT process that does it before the transformation.

It’s essential to know that the ETL process in data warehouse is a cyclical and international data migration and integration method, which you should re-run every time you add new data.

Data Extraction

As we mentioned, data extraction is the first step of data warehouse ETL. It includes phases like locating and extracting the initial data and is essential for cloud-based data migration and integration.

That’s why professional companies like Rivery offer many ETL tools for this purpose. These can help you connect to almost all API sources, provide code-based support, and enable reverse ETL for data extraction purposes.

Extraction Methods In Data Warehouse

The data warehouse ETL extraction process can include various methods, such as logical and physical extraction. Logical extraction in data warehouses usually applies to mobile devices, like cell phones and laptops. It uses APIs (Application Programming Interfaces) to extract all the data from the source in a single go.

We can further divide this data warehouse ETL process into full and incremental extraction. Full-logical extraction is the complete data extraction from a single storage system, such as an app, a cloud platform, or other software, without the need for a tracking source system. On the other hand, incremental-logical extraction is when, after the initial extraction, you extract all the new, added, or altered information.

Contrary to it, physical extraction focuses on the deleted or outdated but relevant files. Physical extraction can be online or offline, depending on whether you extract the data within or outside the source.

Extracting Changed Data

The changed data extraction process is usually an automated ETL process conducted after the initial load into the data warehouse. During the extraction process of changed data, you can apply different extraction methods, such as scrapping, elimination, cleansing, or audit columns.

In this case, your ETL tools will help you tailor predefined algorithms, classes, or clusters to extract new or altered data, transform it, and load it into the targeted data warehouse. This is also true for cloud data warehouses. That’s why if you want automatic and instant syncing of changed data, you should turn to ETL companies like Rivery!

Data Transformation

Data transformation is the second step of the data warehouse ETL process. Depending on the techniques and tools, implementing this step can differ. The following’s a brief example of the data transformation implementation process.

How To Implement Transformation?

Firstly, data transformation is tightly connected to the automated ETL process. That’s why you must implement various transformation tools to transform the data from the operational source format to the desired target data warehouse format.

The transformation process consists of a few simple steps: discover data, map data, generate code, execute code, and review. Other optional operational actions may include cleansing, filtering, breaking down columns, and removing duplicates.

Data Loading

Data loading is the last step of the data warehouse ETL process. In this phase, you load the data into the storage system or the data lake. It’s essential to ensure that the loading methods are correct and have as little scope as possible.

Moreover, there’re two main aspects you should be careful about—refreshing and updating data. Depending on the scope of the changes, you can refresh when you write down new data in the targeted warehouse, while you can implement updates if there are changes in the existing data pool.

Flat Files

Flat files contain data in plain text format and are among the most common data objects of the data warehouse ETL process. Here are the two most common cases of processing flat files in ETL—processing fixed length and delimited flat files.

Processing Fixed Length Flat Files in Data Warehousing

Contrary to relational databases, fixed-length flat files contain fixed-width data tables. They use ordinal positions you’d later utilize to identify the fields in the record. They are great for smaller databases with a few requirements and configuration options. However, it’s essential to gather them in a single data pool from which you can manage their attributes, such as type, representation, value, length, and nullness.

Processing Delimited Flat Files in Data Warehousing

Delimited flat files are data that have marks at the end of each particular record. These marks can be commas, tabs, or semicolons. For example, comma-separated values (CSVs) are stored in tabular form, while the tab-delimited ones are stored in separate fields where each record is connected to a single line.

Purpose Of Staging Area

The purpose of the staging area or the landing zone is to act as an intermediary storage or data pool during the data warehouse ETL process. The staging area is crucial because it interconnects the source and the targeted system, holds raw data, makes space for transitory transformational tables, and enables easy source system queries.

Designing The Staging Area

You can design an external staging area (like cloud-based systems) for complex transformation, huge chunks of data, and real-time processing. On the other hand, you can design an internal staging area (like the modern unified cloud data warehouses) to reduce the complexity, index tables, separate data in “marts,” and create “views. When you design the staging area, you should keep an eye on a few design aspects:

- The ETL team must own the staging area;

- Users shouldn’t have access to the staging area;

- ETL tools and operations should be able to operate the staging area’s files and data;

- You shouldn’t use the staging area for generating reports.

When you design or choose a staging area, you should be sure that it can provide you with data integration tools that support cleansing, aggregation, and replication features.

ETL Process Flow

The five elementary steps of the data ETL process flow are extraction, cleaning, transformation, loading, and analysis. A frequent example of the ETL process flow within a company would be connecting to multiple sources, including CRMs and ERPs, extracting batches of files, copying data to the staging area, transforming, and loading the data into the targeted warehouse.

Final Thoughts

The main things to consider when learning about the ETL process are the phases, various approaches and techniques, and the importance of staging areas as intermediaries. Once you’ve understood all of this and implemented it accordingly, you won’t have a problem conducting the ETL process.

Minimize the firefighting. Maximize ROI on pipelines.